In a move to strengthen online safety for younger users, Meta has announced a new update focused on early risk awareness for families using Instagram. The change is designed to help parents identify concerning patterns in teen behaviour without directly exposing private search details.

Meta said Instagram will start notifying parents when a supervised teen repeatedly searches for content related to suicide or self-harm within a short period. The company clarified that the feature is meant to flag potential warning signs rather than individual searches. The update expands existing teen safety tools on Instagram and will first roll out in select English-speaking markets, with wider availability planned later this year. Last year, all users under 18 were placed in a stricter 13+ setting that requires parental approval to change.

The notification feature will go live next week in Australia, Canada, the UK and the US. Meta did not specify the exact time window or number of searches that would trigger an alert.

Only parents who have enabled parental supervision will receive these notifications, and both the parent and the teen linked through supervision will be informed before the feature becomes active. An alert will be sent if a teen repeatedly searches for phrases that promote suicide or self-harm, that suggest intent to self-harm, or that include related keywords.

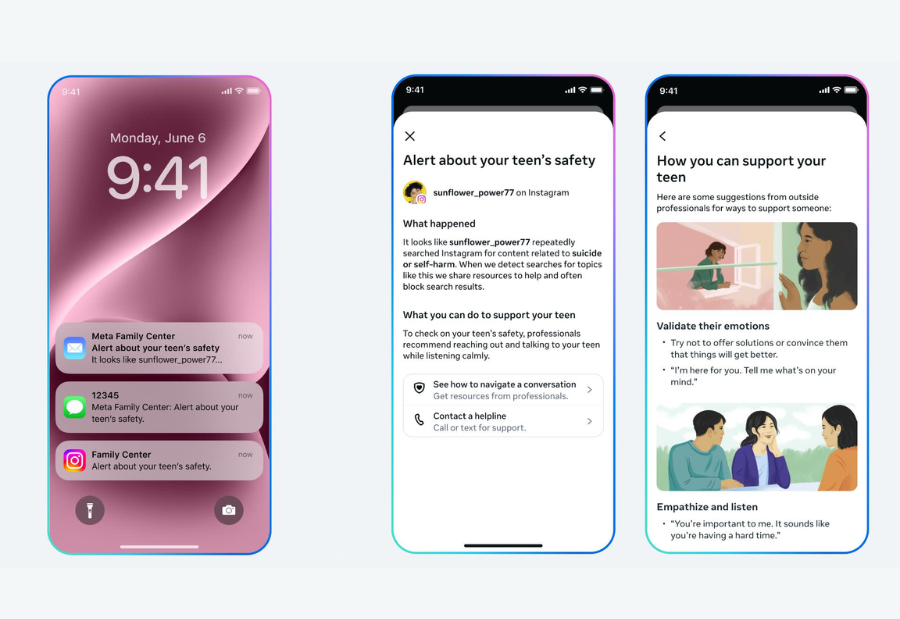

Meta said notifications may be delivered through email, text message, WhatsApp or in-app alerts, depending on available contact details. Opening the alert will display a full-screen message explaining the repeated search activity and offering expert guidance to help parents start a supportive conversation.

The company added that it already blocks searches clearly connected to suicide or self-harm and redirects users to support resources and helplines. Meta said it analysed search patterns and consulted its Suicide and Self-Harm Advisory Group to set a threshold that avoids excessive alerts. It acknowledged that some notifications may be sent even when there is no immediate risk but said the approach prioritises caution.

Meta also confirmed plans to introduce similar alerts later this year for certain teen interactions with its AI tools. These will notify parents if a teen attempts to discuss suicide or self-harm with the company’s AI systems.

